The game of semantic promises (Or, why you are not using Siri)

Natural language interfaces must go all the way

One can distinguish between the content of a message and the implications of the language used for such a message. If I say: “Good morning”, content-wise I communicate my intention to greet, but speech-act-wise I promise that I speak English. If I say “PK♥♦”, I state the fact that I can be understood in the formal language of a zip file.

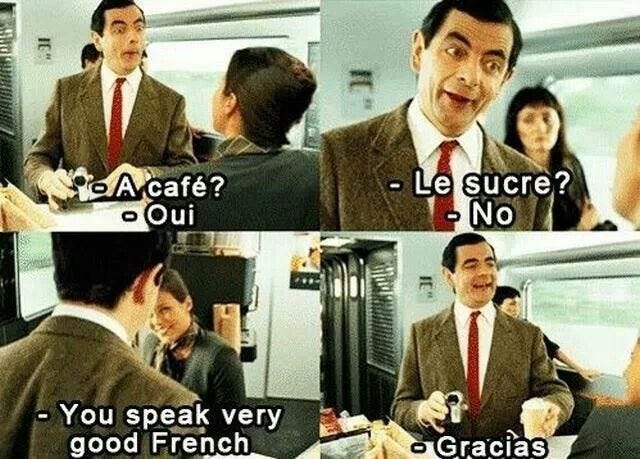

And when I say "Ein Milchkaffee, bitte" here in Berlin, I imply that I will be able to handle a follow-up question in German. If my bluff is called and I can’t deliver, it’s a disappointing day for all parties involved; so disappointing that any hint of an accent or awkward delivery makes many a local barista switch immediately to English. Language learners hate this, but we also try to be conscious of the fact that no one wants to be an unpaid language tutor.

Let’s unpack this a little. An exchange of such messages is not completely free, and the transaction costs are high enough to make failed communication frustrating. The proposed language of communication is a promise to handle whatever semantics the language can express; it’s a bad situation when that promise turns out to be a bluff and the bluff is called.

Now if I craft a complex sentence for you to handle, and you completely fail at understanding it, it’s annoying. If you know you don’t understand it, at least it’s something to work with. But if you grossly simplify it, misunderstand it stupidly, and still pretend that you completely understood it, that’s a communication disaster that sometimes takes a lot of patience to untangle.

We could distinguish between the German language proper and the Tourist one (actually, it’s probably more often expats, but “tourist” is the derogatory term of choice for us anyway). Tourist is the subset of the German language that a particular tourist/expat understands; those subsets are quite similar among tourists (and expats), with a lot of memes enumerating the precise sets of phrases.

So there’s a choice for both parties between trying (and perhaps bluffing) to use German, or “simply” using English or some kind of German-like baby-talk. This game has two equilibria: either the expats aspire to speak real German (and painfully fail at that for many years), or everyone immediately switches to some kind of terrible lowest-common-denominator German-English for interactions with people they don’t know. Now, in many cases the second outcome seems unpalatable: I read a story once about Quebec(?) trying to avoid it by fiat (I can’t find the eloquent source). In Berlin, I think it’s more a question of etiquette, enforced by the locals being both very reluctant to speak English and quite demanding of one’s German.

This might be the better equilibrium (thanks @berlinauslandermemes)

Let’s talk now about natural language interfaces. Remember when you tried Siri, and you almost immediately realized it doesn’t really understand English? It guesses things with a recognizable predefined grammar, but can’t handle much more. Siri’s interface is not so much a “Natural Language” one as a “Small Subset of English” one. If one is patient enough, one adapts to this small subset, learns the few sentences that Siri can understand, and avoids the rest. The visceral aversion to further communication failures makes any incremental improvements of Siri much harder to adopt.

ChatGPT, of course, is a different beast. It does seem to understand the language quite well, as long as you’re simply chatting with it. The problem, however, arises when it is integrated as an interface to another system. We see a lot of systems being built with a chat interface that is supposed to be fluent and comfortable. But those systems can’t actually handle everything ChatGPT can figure out for them. ChatGPT parses the requests all right, but there are simply not enough APIs, or there is a massive mismatch in the execution model, to express them formally in the system’s language.

A Natural Language Interface is a huge promise to understand, and handle, whatever can be expressed in natural language. It’s often no more than a bluff. And if the user calls it, he knows that there’s no “natural language” at play here, and that he would have to learn the actual sub-language of the system to be able to use it productively.

It is quite often not worth it for the user to learn this sub-language. Not only is it inferior and a bit annoying to learn, but it’s also, most of the time, very badly defined. It’s undefined because the developers (or rather, the product people who order them around) are often in denial about even the existence of this sub-language. And because of that, the sub-language changes a lot without any advance warning[1] or, in some cases, even any control from the developers themselves.

And the worst of it is that an AI interface often can’t even recognize, or is programmed to not recognize, the situation when it can’t actually fully and completely “understand”, or effectively execute, a complex request. Such situations make those interfaces inherently untrustworthy and hard to steer, because often you’re not completely aware of the gross simplification that is happening, or have no clear means to fix it. Avoiding a situation like this is the reason, I believe, many people reach for a normal, traditional UI when they have an important and non-trivial task.
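One way to picture the alternative is an interface that checks a parsed request against what the backend can really do, and refuses loudly instead of simplifying silently. The following is only a minimal sketch under stated assumptions: `SUPPORTED_ACTIONS`, `Step`, `Outcome`, `plan_or_refuse` and `describe` are all hypothetical names invented for illustration, and the list of steps is assumed to come from some upstream language model that has already parsed the user's request.

```python
from dataclasses import dataclass, field

# Hypothetical capability table: the only actions this imaginary backend supports.
SUPPORTED_ACTIONS = {"set_timer", "stop_timer"}


@dataclass
class Step:
    """One action extracted from the user's request (assumed to come from an LLM parse)."""
    action: str
    args: dict = field(default_factory=dict)


@dataclass
class Outcome:
    executed: list[Step]     # steps accepted for execution
    unsupported: list[Step]  # steps the backend has no way to express


def plan_or_refuse(steps: list[Step]) -> Outcome:
    """Accept the request only if every step is supported.

    If anything is unsupported, nothing is accepted and every gap is
    reported, so the request is never silently simplified.
    """
    unsupported = [s for s in steps if s.action not in SUPPORTED_ACTIONS]
    if unsupported:
        return Outcome(executed=[], unsupported=unsupported)
    return Outcome(executed=list(steps), unsupported=[])


def describe(outcome: Outcome) -> str:
    """Turn the outcome into an honest message for the user."""
    if outcome.unsupported:
        names = ", ".join(s.action for s in outcome.unsupported)
        return f"I can't do all of that. Unsupported: {names}. Nothing was executed."
    return f"Done: {', '.join(s.action for s in outcome.executed)}."


# A request that mixes a supported and an unsupported step is refused as a whole.
request = [Step("set_timer", {"minutes": 5}), Step("order_coffee")]
print(describe(plan_or_refuse(request)))
```

Refusing the whole request is a deliberate choice in this sketch: a partial execution that is reported as success is exactly the gross simplification described above. A real system might instead offer to run the supported part, as long as it says so explicitly.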

Salami’s bet is that Natural Language Interfaces are all-or-nothing, and it’s not enough to simply throw badly done “function-calling” at an LLM to match the expected semantics. We want to go against the dystopia of weak pseudo-natural sub-languages and make sure the largest possible expressible space is available to the users.

[1] I once learned to silence Alexa’s timer by saying “Thank you”, and I used the timer a lot. It was an interesting example of the performativity of language. Then they changed the thank-you handler to respond politely and keep the timer screaming. I figured out I could just say “Alexa, shut up”, but I don’t really want to, so I ended up not using it at all anymore.